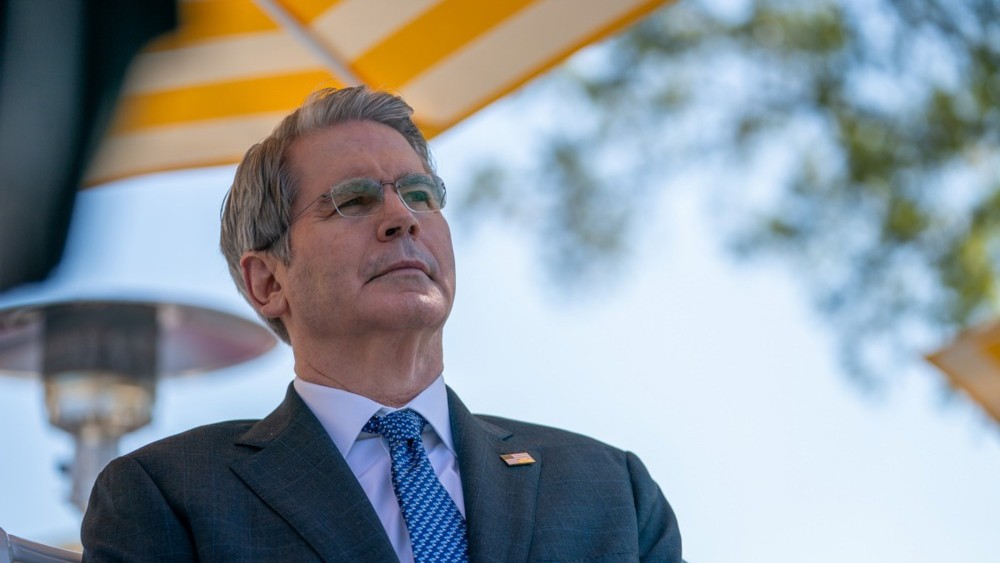

On April 7th, U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an urgent meeting with the leaders of Citigroup, Morgan Stanley, Bank of America, Wells Fargo, and Goldman Sachs. The trigger was not a data breach or a leak but a public announcement about a new artificial intelligence model.

What is Mythos and why you don't have it yet

Anthropic announced Project Glasswing, an initiative built on Claude Mythos Preview, a model capable of autonomously identifying software vulnerabilities at scale. Access was granted only to a select group: Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. The general public is not among them.

Claude Mythos Preview is a general-purpose model that has not been publicly released, yet it demonstrates an uncomfortable fact: AI has reached a level where it outperforms all but the most highly skilled humans at finding and exploiting vulnerabilities.

"We did not intentionally teach Mythos Preview these capabilities. They emerged as a side effect of general improvements in code, reasoning, and autonomy."

— Anthropic, Project Glasswing technical report

In other words: the model learned to break systems while being trained to write better code.

What exactly has it already done

Within weeks, Mythos Preview identified thousands of zero-day vulnerabilities (flaws previously unknown to their developers) in every major operating system and every major web browser, as well as in a range of other critical software.

Each documented exploit was written entirely autonomously, with no human intervention after the initial request. Given a list of 100 CVEs and other known Linux kernel vulnerabilities, Mythos independently selected the 40 it judged potentially exploitable.

Over 99% of the discovered vulnerabilities remain unfixed, which is why Anthropic considers disclosing them publicly irresponsible. The model that knows where the "holes" are is not being released publicly, yet details about it had already leaked earlier because of a human error that left data in a publicly accessible cache.

Anthropic's logic: attack first to defend

Project Glasswing is an "urgent attempt" to put frontier model capabilities to work for defense before similar capabilities fall into the hands of hostile actors. Anthropic is allocating up to $100 million in model usage credits and $4 million in direct donations to open-source security organizations.

There is also a purely pragmatic argument for this approach: the same capabilities that make AI models dangerous in hostile hands make them invaluable for finding and fixing vulnerabilities in critical software.

- Defenders have an advantage for now, but only a temporary one: frontier model capabilities are likely to improve significantly within the next few months.

- The banking system is among the priority targets, which is why the Treasury gathered CEOs rather than technical directors.

- Open source is the weakest link: it makes up the vast majority of the code in modern systems, including the systems AI agents themselves use to write new software.

The question is not whether someone will find these vulnerabilities. Anthropic has already found them. The question is whether the more than 99% that remain unfixed can be patched before a similar model ends up in the hands of someone who won't schedule meetings with bankers but will act immediately. And if that race is lost, what will the first attack cost?